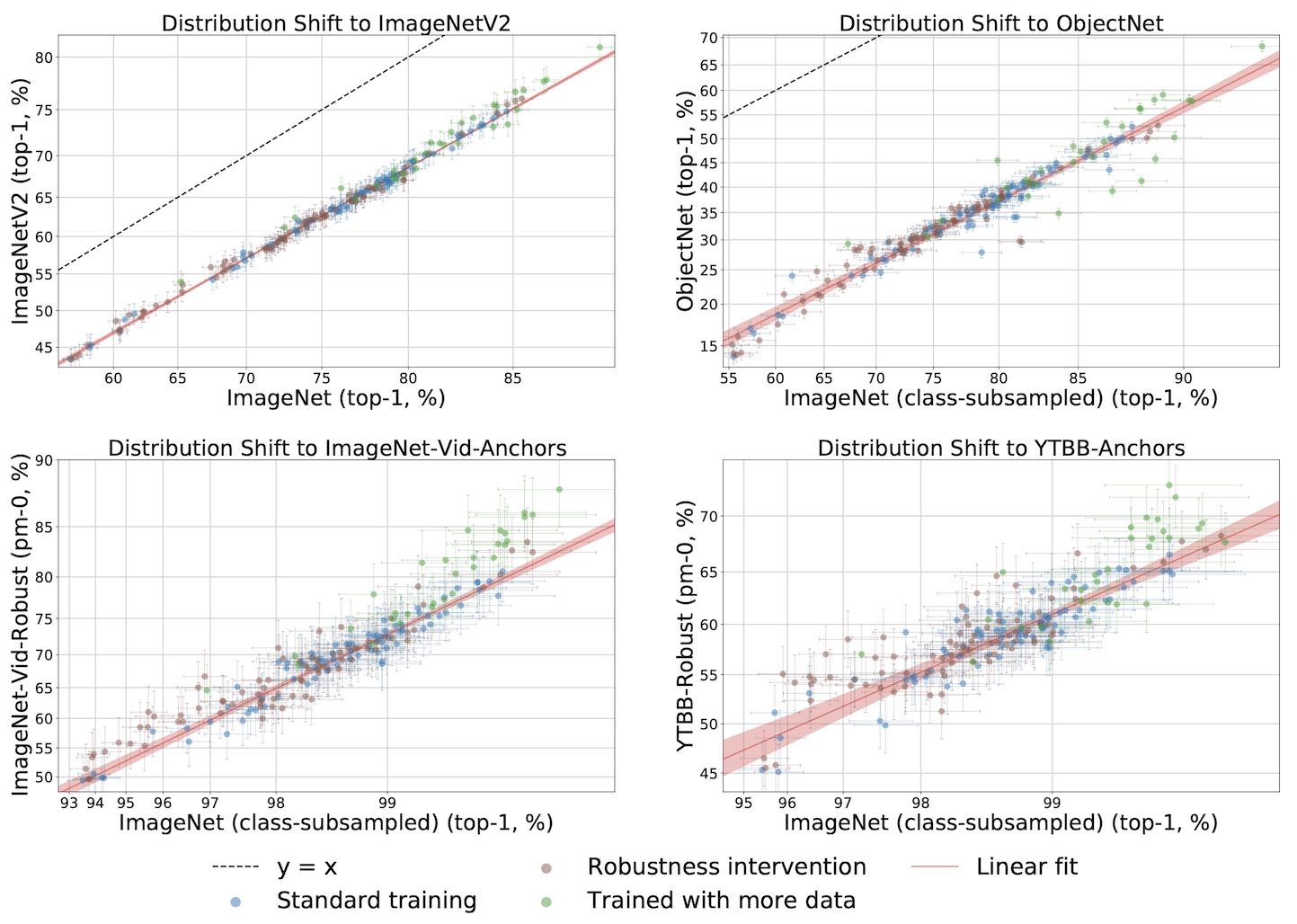

(Right) Testing robustness by plotting models in the testbed against a few natural distribution shifts.

Check out our interactive website to play with the data and our paper to read our analysis.

    from torchvision.models import resnet50

    from registry import registry
    from models.model_base import Model, StandardTransform, StandardNormalization

    registry.add_model(
        Model(
            name = 'resnet50',
            transform = StandardTransform(img_resize_size=256, img_crop_size=224),
            normalization = StandardNormalization(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]),
            classifier_loader = lambda: resnet50(pretrained=True),
            eval_batch_size = 256,
        )
    )
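
The `mean` and `std` values in the registration above are the per-channel statistics commonly used for ImageNet-pretrained models. As a rough sketch, assuming `StandardNormalization` applies the usual per-channel `(x - mean) / std` to inputs already scaled to `[0, 1]` (an assumption, since the class itself is not shown here), the arithmetic looks like the following. The helper `normalize_pixel` is hypothetical and is not part of the testbed.

```python
# Hypothetical illustration only; this is NOT the testbed's StandardNormalization.
# Assumes channel values are already scaled to the range [0, 1].
IMAGENET_MEAN = [0.485, 0.456, 0.406]
IMAGENET_STD = [0.229, 0.224, 0.225]


def normalize_pixel(rgb, mean=IMAGENET_MEAN, std=IMAGENET_STD):
    """Apply per-channel (value - mean) / std to a single RGB pixel."""
    return [(value - m) / s for value, m, s in zip(rgb, mean, std)]


# A pixel equal to the channel means maps to zero in every channel.
centered = normalize_pixel(IMAGENET_MEAN)

# A pure white pixel maps to a positive value in every channel.
white = normalize_pixel([1.0, 1.0, 1.0])
```

In a real pipeline the same operation runs over whole image tensors rather than single pixels, so a model trained with different statistics would need its own `mean` and `std` here.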

    python eval.py --gpus 0 1 --models resnet50 densenet121 --eval-settings val imagenetv2-matched-frequency

    @inproceedings{taori2020measuring,
        title={Measuring Robustness to Natural Distribution Shifts in Image Classification},
        author={Rohan Taori and Achal Dave and Vaishaal Shankar and Nicholas Carlini and Benjamin Recht and Ludwig Schmidt},
        booktitle={Advances in Neural Information Processing Systems (NeurIPS)},
        year={2020},
        url={https://arxiv.org/abs/2007.00644},
    }